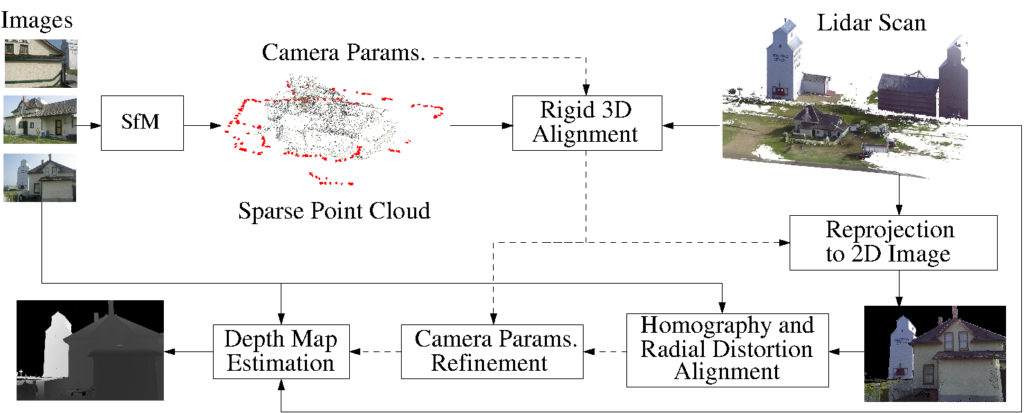

We present a novel framework for precisely estimating dense depth maps by combining 3D lidar scans with a set of uncalibrated RGB camera images of the same scene. Rough 3D structure estimates, obtained by applying structure from motion (SfM) to the uncalibrated images, are first co-registered with the lidar scan. A precise alignment between the two datasets is then estimated by identifying correspondences between each captured image and the image obtained by reprojecting the 3D lidar point cloud into the corresponding camera. This alignment is used to update both the camera geometry parameters and the per-camera radial distortion estimates, yielding a 3D-to-2D transformation that accurately maps the 3D lidar scan onto the 2D image planes. The 3D-to-2D map is then used to estimate a dense depth map for each image.
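To make the final 3D-to-2D mapping step concrete, the sketch below projects lidar points into one camera and keeps the nearest depth per pixel. It is an illustration only, not the implementation from the paper: the pinhole model with two radial distortion coefficients (`k1`, `k2`), the function names `project_points` and `sparse_depth_map`, and the nearest-point z-buffer are all assumptions introduced here. The result is a sparse depth map; how it is densified is not specified above and is not shown.

```python
import numpy as np


def project_points(points_world, R, t, K, k1=0.0, k2=0.0):
    """Project Nx3 world points to pixel coordinates.

    Assumed model (not taken from the paper): pinhole camera with
    rotation R (3x3), translation t (3,), intrinsics K (3x3), and a
    two-term polynomial radial distortion (k1, k2).
    Returns (pixels Mx2, depths M) for the M points in front of the camera.
    """
    cam = points_world @ R.T + t           # world frame -> camera frame
    cam = cam[cam[:, 2] > 0]               # drop points behind the camera
    z = cam[:, 2]
    x = cam[:, 0] / z                      # normalized image coordinates
    y = cam[:, 1] / z
    r2 = x * x + y * y
    d = 1.0 + k1 * r2 + k2 * r2 * r2       # radial distortion factor
    u = K[0, 0] * x * d + K[0, 2]
    v = K[1, 1] * y * d + K[1, 2]
    return np.stack([u, v], axis=1), z


def sparse_depth_map(points_world, R, t, K, width, height, k1=0.0, k2=0.0):
    """Rasterize projected lidar points into a height x width depth image.

    Pixels that receive no lidar point stay at +inf. When several points
    land on the same pixel, the smallest depth is kept (simple z-buffer).
    """
    pixels, z = project_points(points_world, R, t, K, k1, k2)
    cols = np.round(pixels[:, 0]).astype(int)
    rows = np.round(pixels[:, 1]).astype(int)
    inside = (cols >= 0) & (cols < width) & (rows >= 0) & (rows < height)
    depth = np.full((height, width), np.inf)
    np.minimum.at(depth, (rows[inside], cols[inside]), z[inside])
    return depth


# Toy example: identity pose, 5x5 image, focal length 100 px.
K_demo = np.array([[100.0, 0.0, 2.0],
                   [0.0, 100.0, 2.0],
                   [0.0, 0.0, 1.0]])
pts_demo = np.array([[0.0, 0.0, 2.0],     # hits the image center
                     [0.0, 0.0, 5.0],     # same pixel, farther away
                     [0.01, 0.0, 1.0],    # one pixel to the right
                     [0.0, 0.0, -1.0]])   # behind the camera, ignored
depth_demo = sparse_depth_map(pts_demo, np.eye(3), np.zeros(3), K_demo, 5, 5)
```

In this toy setup only two pixels receive a depth value. In the pipeline described above, `R`, `t`, `K`, and the distortion coefficients would be the refined per-camera estimates produced by the alignment step.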

- Lidar and SfM: Complementary Nature

| | Pros | Cons |
| --- | --- | --- |
| Lidar | High accuracy | Low resolution |
| | Untextured regions | Time-consuming |
| SfM | High resolution | Low accuracy |
| | Simple operation | Texture required |

- Sample Results

Sample results for dense depth map estimation. The first row shows five input RGB images (3888×2592 pixels) captured with a digital camera, and the second row shows the corresponding depth maps generated by our method.

- Publication