This project is supported by the National Science Foundation under grant No. 1741472, titled "BIGDATA: F: Audio-Visual Scene Understanding".

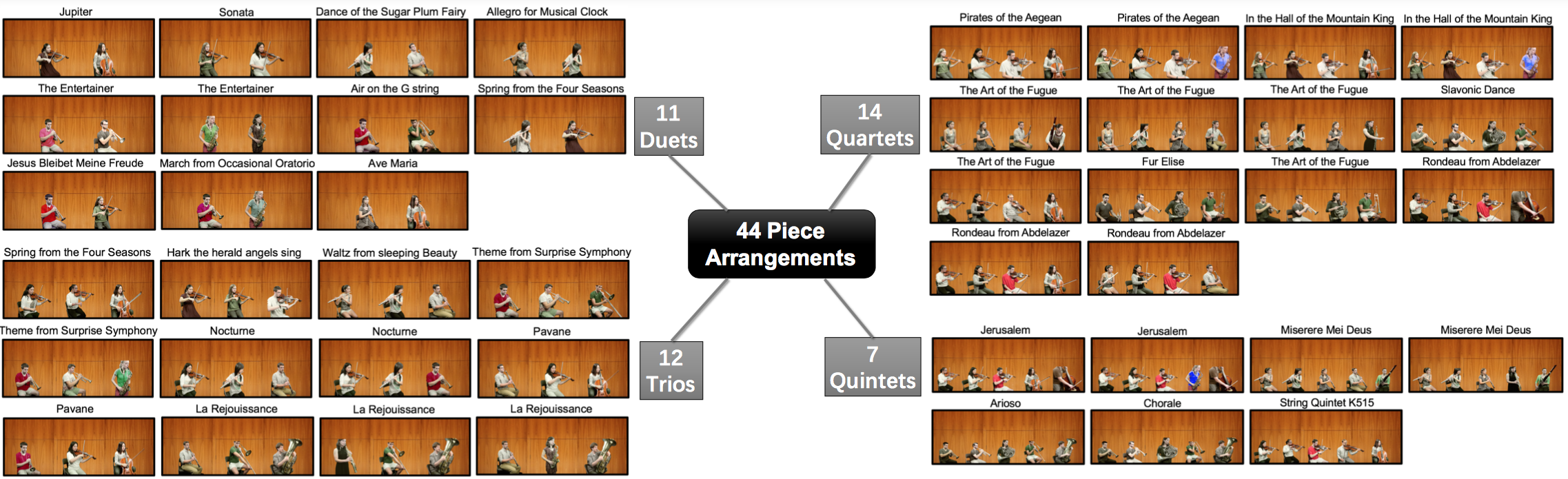

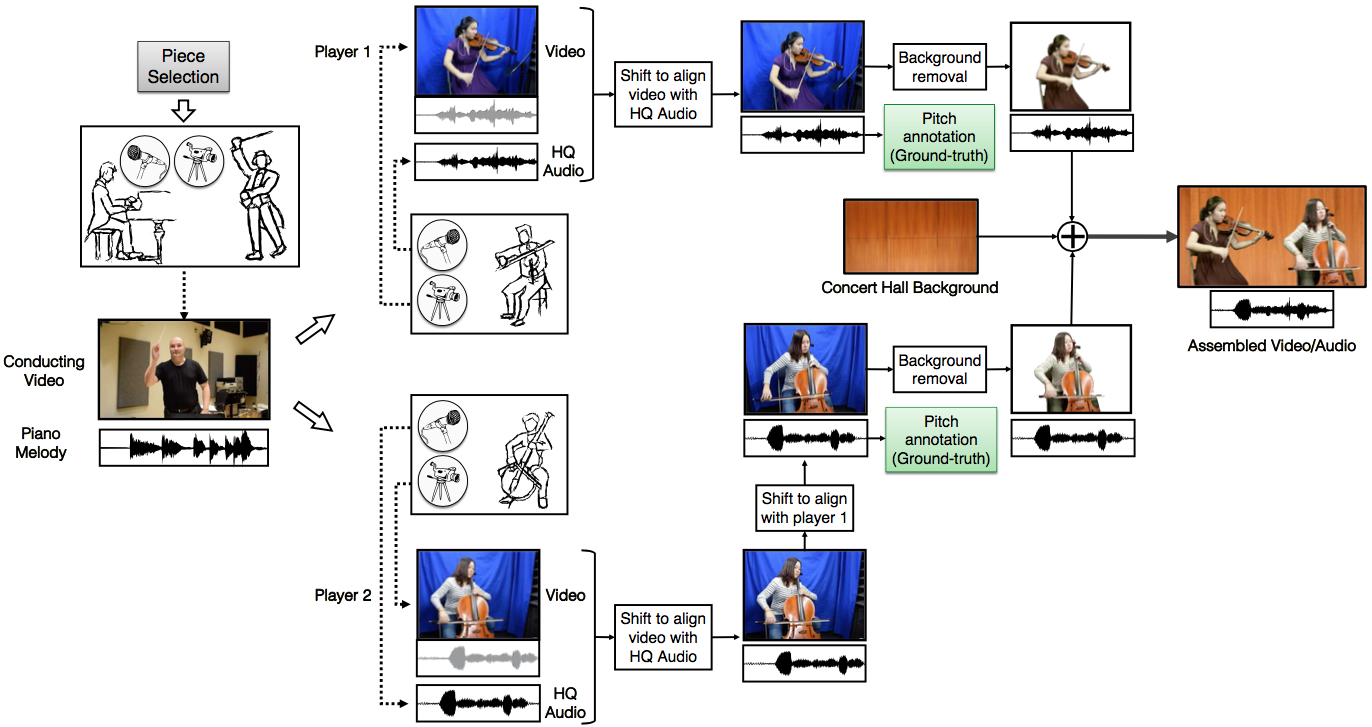

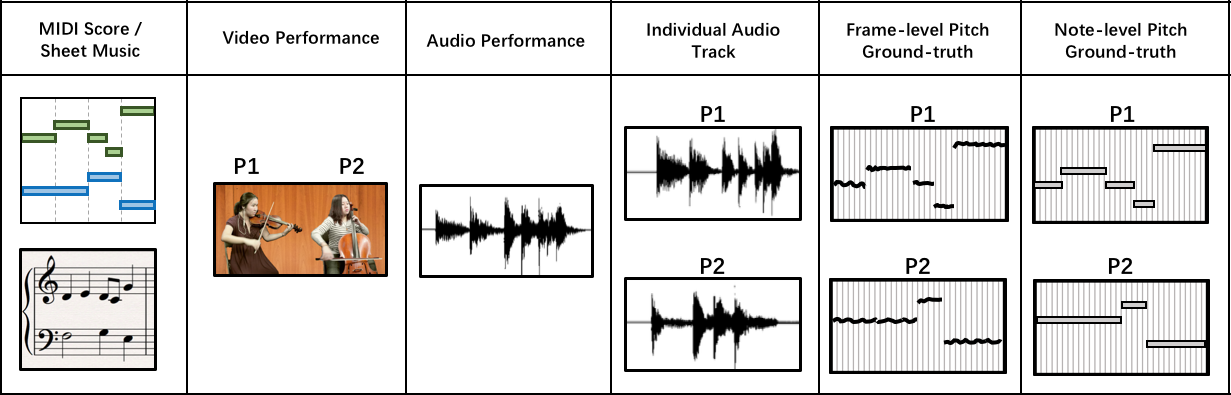

We introduce a dataset for facilitating audio-visual analysis of musical performances. The dataset comprises a number of simple multi-instrument musical pieces, each assembled from coordinated but separately recorded performances of the individual tracks. For each piece, we provide the musical score in MIDI format, high-quality audio recordings of the individual instruments, and videos of the assembled piece. We anticipate that the dataset will be useful for multi-modal information retrieval tasks such as music source separation, transcription, and performance analysis, and that it can also serve as ground truth for evaluating performances.

The dataset is organized into 44 folders, one for each of the 44 pieces. Each piece folder contains the following files:

The pitch/note annotations of all the tracks can be visualized here:

(This is only for checking annotation quality; there is no need to download it.)

One example from the 44 pieces in the dataset:

Video performance

Individual audio tracks with ground-truth pitch/note annotations

Download the data folder for this sample piece, including video, audio, annotations, musical scores

Download the dataset documentation file separately

Download the whole dataset package (12.5GB) (NOW AVAILABLE!)
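Once the package has been downloaded and extracted, the folder organization described above (one folder per piece) can be checked with a short script. The following is a minimal sketch, not part of the dataset's own tooling: the function name `summarize_dataset` and the root path are hypothetical, and the script makes no assumption about file names, only counting the files in each piece folder by extension.

```python
from collections import Counter
from pathlib import Path


def summarize_dataset(root):
    """Map each piece folder name to a count of its files by extension.

    `root` is assumed to be the directory that holds the piece folders.
    """
    summary = {}
    for folder in sorted(Path(root).iterdir()):
        if not folder.is_dir():
            continue  # skip loose files sitting next to the piece folders
        summary[folder.name] = Counter(
            f.suffix.lower() for f in folder.iterdir() if f.is_file()
        )
    return summary


# Example usage (the path is a placeholder for wherever the package was extracted):
# summary = summarize_dataset("path/to/dataset")
# print(len(summary), "piece folders found")
# for name, counts in summary.items():
#     print(name, dict(counts))
```

If the extraction is complete, the number of piece folders reported should match the 44 pieces stated above; a different count suggests an incomplete download or a different root directory.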